How to run Python serverless applications on the Google Cloud Platform

Discover three different ways of running serverless applications on GCP: App Engine, Cloud Run and Cloud Functions

I've used the Google Cloud Platform (GCP) extensively over the last few years and, as a cloud platform, it is one of the most enjoyable you can work with. It really helps you focus on what matters, whether you are building an MVP, a data ingestion pipeline, a single function or a full-fledged application.

Let's focus on the serverless offering and discover how you can run Python serverless applications on top of GCP.

There are 5 different ways in which you can deploy a Python application on GCP:

- A virtual machine (Compute Engine)

- A Kubernetes cluster (GKE)

- App Engine

- Cloud Functions

- Cloud Run

App Engine, Cloud Functions and Cloud Run are 3 different ways to deploy serverless workloads on GCP.

What does serverless mean in this context?

- You don't need to pre-allocate resources (like number of machines)

- You pay (almost) nothing if you don't receive any requests

- It can scale up and down (to zero instances/functions) automagically

Before starting: configure the gcloud SDK

We are going to use the gcloud SDK, so make sure it is set up properly:

- A valid GCP account with a project

- The gcloud SDK installed

- The gcloud SDK configured; these are the magic lines you need to run:

# You can use whatever you like for CONFIGURATION-NAME

gcloud config configurations create {CONFIGURATION-NAME}

# the project id comes from GCP

gcloud config set project {PROJECT-ID}

# This command will open a browser tab

gcloud auth login

# Check if everything works

gcloud config configurations describe {CONFIGURATION-NAME}

- You are ready!

App Engine Standard Environment

App Engine comes in two flavors, the standard and the flexible environment; here we focus on the standard environment.

If you are a fan of Heroku, App Engine is the closest experience you can get inside GCP.

All you need is an app.yaml and a requirements.txt, and then you are ready to go.

There's a repository with a runnable example. A few things to notice:

- I use Poetry as the dependency management tool and export the requirements as requirements.txt

- There's an app.yaml and a .gcloudignore
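The repository's main.py isn't reproduced in this post. As a hypothetical stand-in, here is roughly the smallest main.py that the gunicorn entrypoint used in this section (main:app with the Uvicorn worker class) can load: a bare ASGI callable with no web framework. The JSON response and the lifespan handling are my own assumptions, not the repository's code; requirements.txt would need at least gunicorn and uvicorn.

```python
# main.py -- hypothetical minimal ASGI app for: gunicorn main:app -k uvicorn.workers.UvicornWorker
import json


async def app(scope, receive, send):
    """Bare ASGI application: every HTTP request gets a small JSON response."""
    if scope["type"] == "lifespan":
        # Uvicorn sends startup/shutdown events; acknowledge both so the worker starts cleanly.
        while True:
            message = await receive()
            if message["type"] == "lifespan.startup":
                await send({"type": "lifespan.startup.complete"})
            elif message["type"] == "lifespan.shutdown":
                await send({"type": "lifespan.shutdown.complete"})
                return
    elif scope["type"] == "http":
        body = json.dumps({"status": "ok", "path": scope["path"]}).encode("utf-8")
        # An HTTP response is two messages: status and headers first, then the body.
        await send(
            {
                "type": "http.response.start",
                "status": 200,
                "headers": [(b"content-type", b"application/json")],
            }
        )
        await send({"type": "http.response.body", "body": body})
```

In a real project a web framework would build this callable for you; the raw version only shows what main:app points at.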

The app.yaml

The app.yaml needs at least two entries: runtime and entrypoint.

App Engine supports both Python 3.7 and Python 3.8, so you can select the runtime you want to use.

The entrypoint is the command you want to run (like the web process in a Heroku Procfile).

runtime: python38

entrypoint: gunicorn main:app -w 4 -k uvicorn.workers.UvicornWorker

Create and deploy an App Engine application

Once you have cloned the repository, only a few commands stand between running the application locally and running it on App Engine:

# You'll have to select a region and you can't change it afterwards

gcloud app create

gcloud app deploy

Just wait a couple of minutes and your application should be running online. Pretty neat, isn't it?

Performance

I ran some load tests using hey with 5000 requests (hey -n 5000 URL) and got some really nice numbers. The application is not doing much, so I am basically measuring the overhead of the GCP infrastructure.

Summary:

Total: 5.4324 secs

Slowest: 0.3870 secs

Fastest: 0.0273 secs

Average: 0.0517 secs

Requests/sec: 920.3966

Sweet!

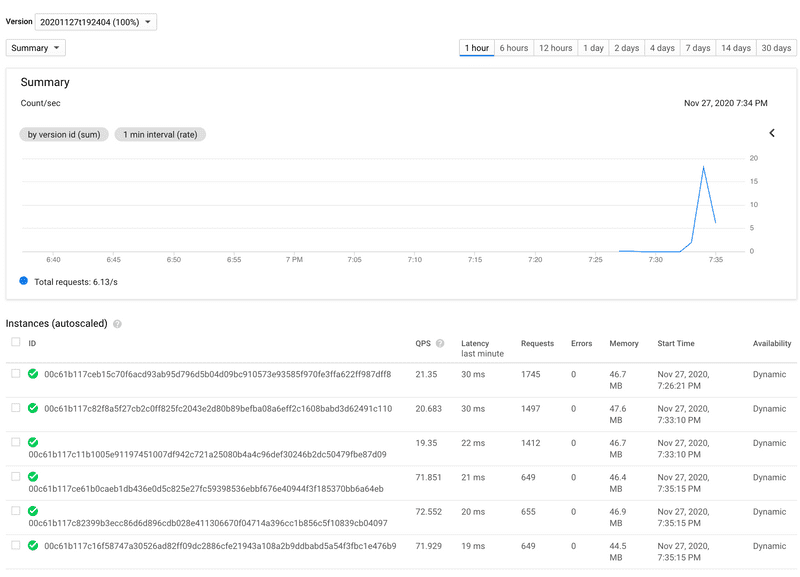

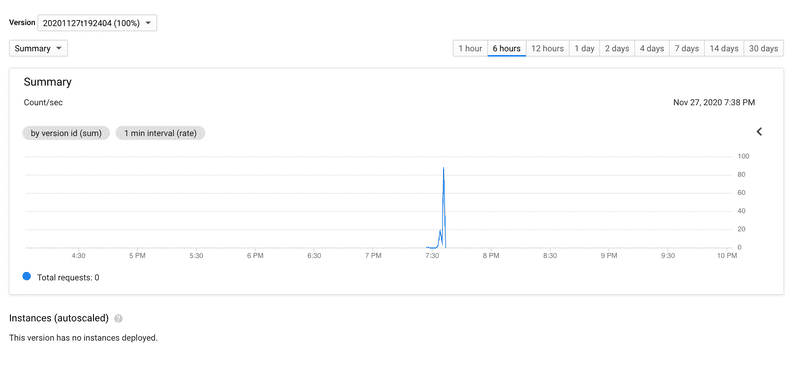

It's also nice to see how the number of instances scales up and down.

Cloud Functions

Cloud Functions are the closest thing to AWS Lambda you can get inside the GCP ecosystem, with one key difference:

- You can call your Cloud Function directly: HTTP endpoints (triggers) are available out of the box

Google recently announced API Gateway for GCP, so you can now have the AWS Lambda + API Gateway experience on GCP as well.

You can trigger Cloud Functions with events (Cloud Storage, Pub/Sub, etc.) or by calling them directly.

Let's deploy a Cloud Function; you can find a runnable example here.

There is one main requirement: you need a requirements.txt and a main.py in your base path.

gcloud functions deploy movie-recommender \
--entry-point recommend_movie \
--runtime python38 \
--trigger-http \
--allow-unauthenticated \
--region=europe-west1

--allow-unauthenticated makes your API endpoint public. --entry-point specifies the function you want to call inside main.py.

Cloud Functions triggered by HTTP are based on Flask handlers, so our function receives a Flask request object and needs to return something compatible with Flask's make_response.
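The function body isn't shown in this post, so here is a hypothetical sketch of what an HTTP entry point named recommend_movie could look like. The genre query parameter and the hard-coded catalogue are made up for illustration; the point is the shape: a plain function that takes the Flask request and returns a (body, status, headers) tuple, one of the return values make_response accepts.

```python
import json

# Hypothetical catalogue standing in for the real recommender logic.
MOVIES_BY_GENRE = {
    "comedy": ["Groundhog Day", "The Big Lebowski"],
    "sci-fi": ["Blade Runner", "Arrival"],
}


def recommend_movie(request):
    """HTTP entry point (matches --entry-point recommend_movie).

    `request` is a flask.Request; only its query-string args are used here.
    Returns a (body, status, headers) tuple that Flask turns into a response.
    """
    genre = request.args.get("genre", "comedy").lower()
    movies = MOVIES_BY_GENRE.get(genre)
    headers = {"Content-Type": "application/json"}
    if movies is None:
        return json.dumps({"error": f"unknown genre: {genre}"}), 404, headers
    return json.dumps({"genre": genre, "recommendation": movies[0]}), 200, headers
```

Because the handler only touches request.args, it can be unit-tested with any object exposing a dict-like args attribute, without starting Flask.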

Performance-wise they are also good:

Summary:

Total: 10.5700 secs

Slowest: 4.6717 secs

Fastest: 0.0407 secs

Average: 0.0779 secs

Requests/sec: 473.0385

Cloud Run

Cloud Run is a recent addition to the GCP serverless suite. It is built on Knative, the open-source project for running serverless workloads on top of Kubernetes.

That means you can run Cloud Run on your own GKE cluster or on infrastructure managed directly by Google.

Cloud Run offers a few pluses compared to App Engine and Cloud Functions:

- You deploy containers

- One single instance can handle multiple requests at the same time

- Supports gRPC

- You are not limited by the supported languages or versions

In case you want to play with Cloud Run you can find (another) runnable example.

To deploy an application on Cloud Run you only need a Docker image that the Cloud Run service can pull.

It can be a publicly available image, or an image hosted on the GCP Container Registry.

In my case I decided to use Cloud Build to build the image and push it to the GCP Container Registry.

Once you have cloned the repository, you can run this command:

gcloud builds submit --tag eu.gcr.io/$YOUR_GCP_PROJECT/movie-recommender

This command builds the Docker image and pushes it to the GCP Container Registry.

Then we are ready to deploy the Cloud Run service:

gcloud run deploy movie-recommender \
--image eu.gcr.io/$YOUR_GCP_PROJECT/movie-recommender \
--platform managed \
--allow-unauthenticated \
--region europe-west1 \
--args=gunicorn,main:app,-w,4,-k,uvicorn.workers.UvicornWorker

Let's explain each flag:

- --allow-unauthenticated: same as for Cloud Functions, it exposes a publicly reachable URL

- --platform managed: run on the fully managed Cloud Run platform operated by Google, rather than on your own GKE cluster

- --region: the region in which I want to run the Cloud Run service

- --args: comma-separated arguments that override the image's default command (CMD); here they form the whole gunicorn command line
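Cloud Run tells the container which port to listen on through the PORT environment variable (8080 by default). The --args above work because gunicorn binds to 0.0.0.0:$PORT on its own when PORT is set. If you would rather keep these settings in the image than in the deploy command, a gunicorn config file is one option; this is a sketch under that assumption, not what the repository does.

```python
# gunicorn.conf.py -- hypothetical config file; recent gunicorn versions load ./gunicorn.conf.py by default.
import os

# Cloud Run injects PORT (8080 by default); listen on all interfaces so requests reach the container.
bind = f"0.0.0.0:{os.environ.get('PORT', '8080')}"

# Same settings as the --args flag above: 4 worker processes using Uvicorn's ASGI worker class.
workers = 4
worker_class = "uvicorn.workers.UvicornWorker"
```

With this file in the container's working directory, the flag could shrink to --args=gunicorn,main:app.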

And because we love benchmarks:

Summary:

Total: 5.7388 secs

Slowest: 0.2160 secs

Fastest: 0.0386 secs

Average: 0.0554 secs

Requests/sec: 871.2571

Again, really nice results.
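hey's Requests/sec line is just the number of requests divided by the total wall-clock time, which makes the three runs easy to compare. This small script recomputes it from the Total values reported in the summaries of this post; it measures nothing new.

```python
# Total wall-clock times copied from the three hey summaries in this post.
TOTAL_REQUESTS = 5000
TOTAL_SECONDS = {
    "App Engine": 5.4324,
    "Cloud Functions": 10.5700,
    "Cloud Run": 5.7388,
}


def requests_per_second(requests, total_seconds):
    """Throughput as hey reports it: completed requests / total elapsed time."""
    return requests / total_seconds


baseline = requests_per_second(TOTAL_REQUESTS, TOTAL_SECONDS["App Engine"])
for platform, seconds in TOTAL_SECONDS.items():
    rps = requests_per_second(TOTAL_REQUESTS, seconds)
    print(f"{platform:<16} {rps:7.1f} req/s  ({rps / baseline:.0%} of App Engine)")
```

It prints about 920.4, 473.0 and 871.3 req/s: in these particular runs Cloud Functions delivered roughly half of App Engine's throughput, and Cloud Run about 95%.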

Is serverless always the right answer?

No. Leaving cost aside, there are certain things you can't do, or can't do well:

- WebSockets, GraphQL subscriptions and streaming are limited by the request timeout

- Header and payload sizes are also limited

- You can't create shiny network topologies or decide how requests are routed; you need to build on top of the GCP integrations (and their limits)

- You need to be careful when you access services that limit the number of concurrent clients. A good example is PostgreSQL: every new instance opens its own connections, and the server refuses new ones once max_connections is reached
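A rough way to reason about the PostgreSQL point is a worst-case connection budget: the number of instances the platform may start, times the workers per instance, times the pool size per worker, has to fit under max_connections. A minimal sketch, assuming you cap the number of instances in the platform's scaling settings; the function name and all the numbers in the example are hypothetical.

```python
def max_pool_size_per_worker(max_connections, reserved, max_instances, workers_per_instance):
    """Largest per-worker connection pool that stays under the database limit in the worst case.

    Worst case: every allowed instance is running and every worker has its pool full.
    """
    available = max_connections - reserved
    pool_size = available // (max_instances * workers_per_instance)
    if pool_size < 1:
        raise ValueError("not enough connections: lower max instances or workers per instance")
    return pool_size


# Hypothetical setup: PostgreSQL defaults (max_connections=100, 3 slots reserved for superusers),
# 4 gunicorn workers per instance as in this post, and instances capped at 5.
print(max_pool_size_per_worker(100, 3, max_instances=5, workers_per_instance=4))  # 97 // 20 = 4
```

With the same settings but a cap of 30 instances the budget runs out (97 // 120 = 0), so either the instance cap or the number of workers per instance has to come down.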

Takeaways

App Engine, Cloud Run and Cloud Functions all come with a pretty good free tier, and you pay only for what you use.

They all support private VPC connections through Serverless VPC Access. Serverless VPC Access is really neat: you can build complex topologies on a serverless stack, like mixing virtual machines and serverless applications inside the same network.

The lock-in factor is also pretty low, especially for App Engine and Cloud Run.

Since they are full-fledged applications, you can pack them into containers and run them somewhere else.

They are also well integrated with the Stackdriver products (now Google Cloud's operations suite), which means you get centralized logging and monitoring out of the box.

And yes, you can use structlog and get your JSON logs parsed; just remember to convert level to severity (check this snippet).
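The linked snippet isn't reproduced here, so this is my own minimal sketch of such a processor. Cloud Logging reads the severity of a JSON log line from a severity field, while structlog's add_log_level processor stores the level under level. A structlog processor is just a callable taking (logger, method_name, event_dict) and returning the event dict, so the conversion takes a few lines and the function itself doesn't need to import structlog. The alias table is an assumption for method names that don't match a severity directly.

```python
# Hypothetical structlog processor: rename "level" to the "severity" field Cloud Logging understands.
_LEVEL_ALIASES = {"warn": "warning", "exception": "error", "fatal": "critical"}


def level_to_severity(logger, method_name, event_dict):
    """Move event_dict["level"] into an upper-case event_dict["severity"]."""
    # Fall back to the logging method name if add_log_level did not run earlier in the chain.
    level = event_dict.pop("level", method_name)
    level = _LEVEL_ALIASES.get(level, level)
    event_dict["severity"] = level.upper()
    return event_dict


# Wiring it up (requires structlog; left as a comment so the snippet runs on its own):
# import structlog
# structlog.configure(
#     processors=[
#         structlog.stdlib.add_log_level,
#         level_to_severity,
#         structlog.processors.TimeStamper(fmt="iso"),
#         structlog.processors.JSONRenderer(),
#     ]
# )
```

The processor must sit after add_log_level and before JSONRenderer, since the renderer serializes whatever keys are in the event dict at that point.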

App Engine and Cloud Run both support traffic splitting between different versions (App Engine) or revisions (Cloud Run), so you get A/B testing or canary deployments for free.

If I had to choose one... I'd go with Cloud Run.

Cloud Functions are also great, but they force you to write web applications in a way that I don't like.

But I do love using Cloud Functions for event-based systems, data ingestion or event-driven pipelines.

